
“In those situations where you’re trying to do a projection on very few columns from your entire data set, columnar is much, much better as opposed to a row-based format.” “But if you want to do row-by-row, you have to fetch millions of rows and do the operation on each of the rows,” Shahdadpuri says. (Source: Nexla whitepaper “An Introduction to Big Data Formats: Understanding Avro, Parquet, and ORC.”) If the salary and location data sets are stored in a column-oriented manner, then it’s a relatively simple query that only needs to touch data in those two columns. In order to explain the difference between row and column storage, Shahdadpuri used an example of a big company with a million employees, where an executive wants to find the salaries paid to workers grouped by each location. By their very nature, column-oriented data stores are optimized for read-heavy analytical workloads, while row-based databases are best for write-heavy transactional workloads.

Parquet and ORC both store data in columns, while Avro stores data in a row-based format.

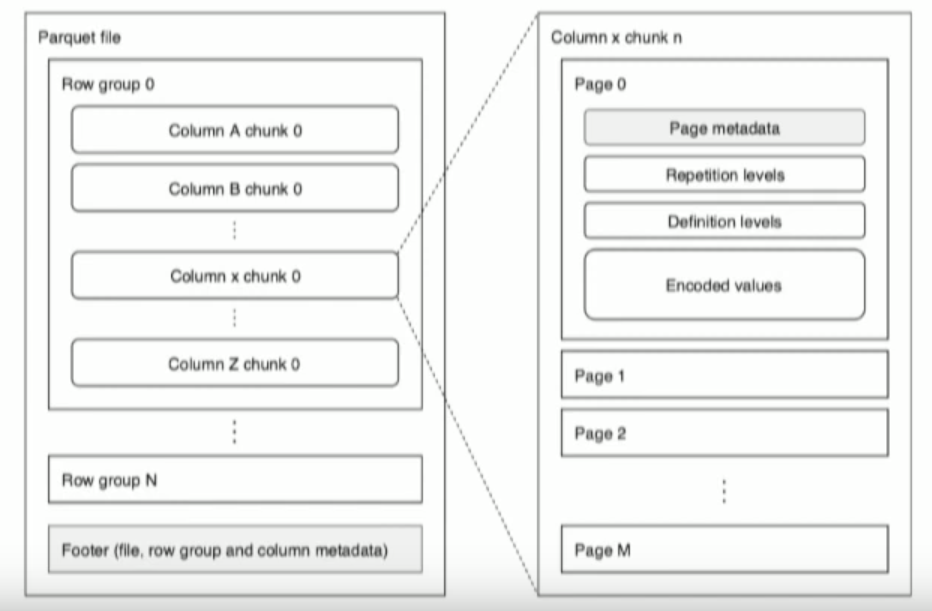

The biggest difference between ORC, Avro, and Parquet is how the store the data. In addition to being file formats, ORC, Parquet, and Avro are also on-the-wire formats, which means you can use them to pass data between nodes in your Hadoop cluster. You can take an ORC, Parquet, or Avro file from one cluster and load it on a completely different machine, and the machine will know what the data is and be able to process it. You cannot split JSON and XML files, and that limits their scalability and parallelism.Īll three formats carry the data schema in the files themselves, which is to say they’re self-described. If you need a human-readable format like JSON or XML, then you should probably re-consider why you’re using Hadoop in the first place.įiles stored in ORC, Parquet, and Avro formats can be split across multiple disks, which lends themselves to scalability and parallel processing. ORC, Parquet, and Avro are also machine-readable binary formats, which is to say that the files look like gibberish to humans. ORC is a row-column format developed by Hortonworks for storing data processed by Hive When you’re spending tens of thousands of dollars to buy a distributed disk system to store terabytes or petabytes of data, compression is a very important factor. What’s The SameĪll three of the formats are optimized for storage on Hadoop, and provide some degree of compression. Nexla CTO and co-founder Jeff Williams and Avinash Shahdadpuri, Nexla’s head of data and infrastructure were kind enough to explain to Datanami what’s going on with ORC, Avro, and Parquet. To get the low down on this high tech, we tapped the knowledge of the smart folks at Nexla, a developer of tools for managing data and converting formats. While these file formats share some similarities, each of them are unique and bring their own relative advantages and disadvantages. 
Luckily for you, the big data community has basically settled on three optimized file formats for use in Hadoop clusters: Optimized Row Columnar (ORC), Avro, and Parquet. Since you’re using Hadoop in the first place, it’s likely that storage efficiency and parallelism are high on the list of priorities, which means you need something else. Plus, those file formats cannot be stored in a parallel manner. In fact, storing data in Hadoop using those raw formats is terribly inefficient. The data may arrive in your Hadoop cluster in a human readable format like JSON or XML, or as a CSV file, but that doesn’t mean that’s the best way to actually store data. You have many choices when it comes to storing and processing data on Hadoop, which can be both a blessing and a curse.

You can store all types of structured, semi-structure, and unstructured data within the Hadoop Distributed File System, and process it in a variety of ways using Hive, HBase, Spark, and many other engines. The good news is Hadoop is one of the most cost-effective ways to store huge amounts of data. Then the question hits you: How are you going to store all this data so they can actually use it? So you’re filling your Hadoop cluster with reams of raw data, and your data analysts and scientists are champing at the bit to get started.
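To make Shahdadpuri’s salary-by-location example a little more concrete, here is a minimal in-memory sketch in Python. It is a toy model, not how Parquet, ORC, or Avro actually lay out bytes on disk. The employee records, field names, helper functions, and the "values read" counter are all hypothetical, and counting values read is only a stand-in for disk I/O. The point is that a row layout has to touch every field of every record, while a columnar layout touches only the two columns the query projects.

```python
from collections import defaultdict

# Hypothetical employee records (names, fields, and salaries are illustrative
# assumptions). Each dict is one "row" in a row-oriented layout.
ROWS = [
    {"id": 1, "name": "Ana", "title": "Engineer", "location": "Austin", "salary": 120_000},
    {"id": 2, "name": "Ben", "title": "Analyst", "location": "Boston", "salary": 90_000},
    {"id": 3, "name": "Cai", "title": "Manager", "location": "Austin", "salary": 150_000},
    {"id": 4, "name": "Dee", "title": "Engineer", "location": "Denver", "salary": 110_000},
]


def to_columns(rows):
    """Pivot row dicts into a columnar layout: one list of values per field."""
    columns = defaultdict(list)
    for row in rows:
        for field, value in row.items():
            columns[field].append(value)
    return dict(columns)


def salary_by_location_rows(rows):
    """Group salaries by location, reading whole records as a row store would."""
    totals = defaultdict(int)
    values_read = 0
    for row in rows:
        # Toy cost model: fetching a record pulls in every field, used or not.
        values_read += len(row)
        totals[row["location"]] += row["salary"]
    return dict(totals), values_read


def salary_by_location_columns(columns):
    """Same query, but touching only the two projected columns."""
    totals = defaultdict(int)
    locations = columns["location"]
    salaries = columns["salary"]
    # Toy cost model: only the location and salary columns are read.
    for location, salary in zip(locations, salaries):
        totals[location] += salary
    return dict(totals), len(locations) + len(salaries)


if __name__ == "__main__":
    row_totals, row_reads = salary_by_location_rows(ROWS)
    col_totals, col_reads = salary_by_location_columns(to_columns(ROWS))
    print(row_totals)  # {'Austin': 270000, 'Boston': 90000, 'Denver': 110000}
    print(row_reads, col_reads)  # 20 8
```

With five fields per record, the row layout reads 20 values and the columnar layout reads 8 to produce the same totals. Adding more fields that the query ignores grows the first number but not the second, which is the projection case described in the quote above.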